Trusted Remote Execution Explained: AWS Rex for Safer AI Agents

Trusted Remote Execution, also called Rex, is an AWS open-source project designed to make operational scripts safer to run. It lets humans or AI agents run helpful actions on a computer, but every action that touches the system is first checked against a rulebook, called a policy. If the script tries to read, write, open, or change something that the policy does not allow, Rex blocks it before the action reaches the system.

The simple idea

Think of a school lab.

A student may be allowed to use a microscope, read the experiment sheet, and write notes in a notebook. But the same student is not allowed to open the chemical cabinet or switch off the electricity for the whole building.

That is what Rex does for scripts.

The script is the student. The computer is the lab. The policy is the teacher’s rulebook.

The script can work, but only inside the rules.

Why this matters now

Scripts are small programs people use to operate systems. A script can check logs, restart a service, inspect a configuration file, or collect information when something breaks.

The old problem is simple: many scripts run with far more permission than their task needs.

A script written to read a log may also have permission to delete the log. A troubleshooting script may accidentally touch production files. A command copied from a chat window may do more than the person expected.

AI agents make the problem sharper. An agent can generate code at runtime. That means the exact script may not exist until the moment it runs. Traditional review does not fit well here because there may be no pull request, no human-written runbook, and no careful approval of every system call.

Rex gives the system owner a hard boundary that does not depend on reviewing every script in advance.

What Rex actually is

Rex is a scripting runtime. That means it is a controlled place where scripts run.

AWS describes it as an open-source runtime where every system operation is authorized by policy. In plain English: the script does not get direct access to the machine. It has to ask Rex for each sensitive action, and Rex checks whether that action is allowed.

Rex uses two important pieces:

Rhai for the script

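Rhai is a lightweight scripting language designed to be embedded in Rust programs. On its own it has no access to files, processes, or the network; a script can only call the functions that the host program chooses to expose. That matters because the script cannot simply reach into the machine and do whatever it wants.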

Cedar for the policy

Cedar is a policy language. It is used to describe permissions clearly: who can do what, on which resource, and under what conditions.

So the design looks like this:

Script says: “I want to read this file.”

Policy says: “Reading this file is allowed, but writing or deleting is not.”

Rex enforces that decision.
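The split between script and policy can be modeled in a few lines. The Python sketch below is not Rex's API and not Cedar syntax; the `Rule` type, the `decide` function, and the action names are hypothetical. It borrows two properties of Cedar's evaluation model: anything not explicitly permitted is denied, and a matching `forbid` overrides any `permit`.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class Rule:
    effect: str         # "permit" or "forbid"
    action: str         # hypothetical action name, e.g. "read" or "write"
    resource_glob: str  # shell-style pattern; note that "*" also matches "/"


def decide(rules: list[Rule], action: str, resource: str) -> bool:
    """Allow only if some permit rule matches and no forbid rule matches."""
    matching = [
        r for r in rules
        if r.action == action and fnmatchcase(resource, r.resource_glob)
    ]
    if any(r.effect == "forbid" for r in matching):
        return False  # forbid always wins
    return any(r.effect == "permit" for r in matching)  # default deny


policy = [
    Rule("permit", "read", "/app/logs/*"),
    Rule("forbid", "read", "/app/logs/secret*"),
]

print(decide(policy, "read", "/app/logs/error.log"))   # True
print(decide(policy, "write", "/app/logs/error.log"))  # False: nothing permits write
print(decide(policy, "read", "/app/logs/secret.key"))  # False: forbid overrides permit
```

The script never sees this logic. It only asks for an operation, and the answer comes from the policy.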

A non-technical example

Imagine Ruby asks an AI agent:

“Check my application logs and tell me why the service failed.”

The agent writes a script that tries to:

- Read the application log

- Read the service configuration

- Restart the service

- Delete old log files

The policy may allow only the first two actions.

So Rex allows:

- read `/app/logs/error.log`

- read `/app/config/service.yaml`

And Rex blocks:

- restart the service

- delete any file

The AI agent receives an access denied error. It can then adjust and continue with only the safe actions.

This is the main point: the AI can be useful without being trusted with everything.
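Here is the same scenario as a minimal Python sketch, assuming a simple allow-list policy. The `check` function, the `service:app` resource name, and the `/app/logs/old.log` path are hypothetical stand-ins, and in Rex the check happens inside the runtime rather than in the script. What matters is the shape of the loop: denied steps raise an error, and the agent records it and carries on.

```python
# Hypothetical policy for the log-debugging scenario: an allow-list of
# (action, resource) pairs. Anything not listed is denied.
ALLOWED = {
    ("read", "/app/logs/error.log"),
    ("read", "/app/config/service.yaml"),
}


def check(action: str, resource: str) -> None:
    """Raise PermissionError unless the (action, resource) pair is allowed."""
    if (action, resource) not in ALLOWED:
        raise PermissionError(f"access denied: {action} {resource}")


# The steps the agent's generated script attempts, in order.
attempts = [
    ("read", "/app/logs/error.log"),
    ("read", "/app/config/service.yaml"),
    ("restart", "service:app"),          # hypothetical service identifier
    ("delete", "/app/logs/old.log"),     # hypothetical file path
]

done, denied = [], []
for action, resource in attempts:
    try:
        check(action, resource)
        done.append((action, resource))  # a real runtime would perform the operation here
    except PermissionError as err:
        denied.append(str(err))          # the agent sees the error and moves on

print("completed:", done)
print("denied:", denied)
```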

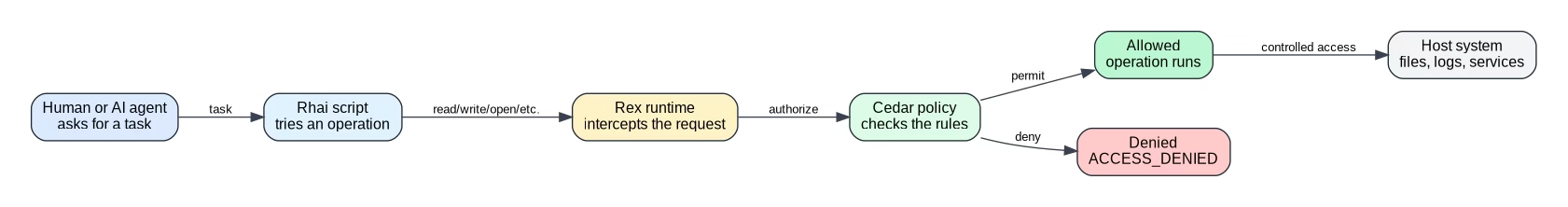

The flow in one picture

A normal script might go straight from code to operating system. Rex adds a policy checkpoint in the middle.

If the policy allows the action, Rex performs it. If not, the action stops there.
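One way to picture the checkpoint is an object that the script must go through to reach the filesystem. In this hypothetical Python sketch, `HostGate` allows reads only inside one directory and keeps an audit trail. It resolves paths before checking them, so a `..` trick cannot escape the allowed folder. Rex exposes its own SDK to Rhai scripts; this sketch does not attempt to reproduce that interface.

```python
import tempfile
from pathlib import Path


class HostGate:
    """Hypothetical checkpoint between a script and the operating system.

    The script receives this object instead of direct file access, so every
    read must pass the policy check before the OS is touched.
    """

    def __init__(self, readable_dir: str):
        # Store the allowed directory as an absolute, resolved path.
        self.readable_dir = Path(readable_dir).resolve()
        self.audit: list[tuple[str, str, bool]] = []  # (action, path, allowed)

    def _allowed(self, path: Path) -> bool:
        # resolve() collapses ".." and follows symlinks, so a path such as
        # "logs/../secret.key" is judged by where it really points.
        return path.resolve().is_relative_to(self.readable_dir)

    def read_text(self, path: str) -> str:
        p = Path(path)
        ok = self._allowed(p)
        self.audit.append(("read", str(p), ok))
        if not ok:
            raise PermissionError(f"policy denies read of {p}")
        return p.read_text()  # reached only after the check passes


with tempfile.TemporaryDirectory() as tmp:
    logs = Path(tmp, "logs")
    logs.mkdir()
    (logs / "error.log").write_text("service crashed: out of memory\n")
    Path(tmp, "secret.key").write_text("do-not-read\n")

    gate = HostGate(readable_dir=str(logs))
    content = gate.read_text(str(logs / "error.log"))
    try:
        gate.read_text(str(logs / ".." / "secret.key"))
    except PermissionError as err:
        print(err)

print(content, end="")
print([ok for _, _, ok in gate.audit])
```

This check-then-read pattern is simplified. A production runtime would also have to handle a file changing between the check and the read.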

Why not just trust the AI agent?

Because trust is not a security control.

An AI agent can misunderstand instructions. It can generate a command that looks reasonable but is too broad. It can be influenced by malicious text in logs, tickets, web pages, or files. It can also hallucinate a command that does not match the real system.

Rex assumes mistakes will happen. Then it limits the damage.

That is a healthier model for production systems.

What kinds of actions could be controlled?

The AWS article mentions operations such as reading, writing, opening files, and other host interactions exposed through the Rex SDK.

In practice, a system owner could design policies around actions like:

- read only specific log folders

- write only to a temporary output folder

- block deletion completely

- allow restarting only one named service

- deny access to secrets, credentials, and private keys

- allow inspection but not modification

This turns a vague instruction like “help debug the server” into a safer contract.
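A contract like the one in that list could be written down as data. The table below is a hypothetical Python model, not Cedar; the service name `app-api` and the secret-file patterns are illustrative assumptions. Reads are limited to logs, writes to a temporary output folder, restarts to one service, and deletion has no permit at all.

```python
from fnmatch import fnmatchcase

# Hypothetical policy: each action maps to resource patterns it may touch
# ("permit") and patterns that are always refused ("forbid").
# Actions missing from the table are denied outright.
POLICY = {
    "read": {
        "permit": ["/app/logs/*"],
        "forbid": ["*.pem", "*.key", "*/.ssh/*", "*secret*", "*credentials*"],
    },
    "write": {
        "permit": ["/tmp/output/*"],
        "forbid": [],
    },
    "restart": {
        "permit": ["service:app-api"],  # exactly one named service
        "forbid": [],
    },
    # No "delete" entry: deletion is blocked completely.
}


def allowed(action: str, resource: str) -> bool:
    rules = POLICY.get(action)
    if rules is None:
        return False  # unknown action: deny
    if any(fnmatchcase(resource, pat) for pat in rules["forbid"]):
        return False  # forbid patterns win over permits
    return any(fnmatchcase(resource, pat) for pat in rules["permit"])


checks = [
    ("read", "/app/logs/error.log"),         # inspection: allowed
    ("read", "/app/logs/secret-token.txt"),  # secret, even inside logs: denied
    ("write", "/tmp/output/report.txt"),     # temp output folder: allowed
    ("write", "/app/config/service.yaml"),   # modification: denied
    ("restart", "service:app-api"),          # the one named service: allowed
    ("restart", "service:database"),         # any other service: denied
    ("delete", "/app/logs/old.log"),         # deletion: always denied
]
for action, resource in checks:
    verdict = "ALLOW" if allowed(action, resource) else "DENY"
    print(f"{action:8} {resource:32} {verdict}")
```

Keeping the policy as data, separate from any script, is what lets the system owner review it once and reuse it for every script an agent generates.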

How this helps humans too

This is not only for AI.

Humans also run scripts under pressure. Anyone who has handled an incident knows the feeling: the service is down, alerts are firing, and someone needs to run a fix quickly.

A policy-enforced runtime can reduce the chance that a rushed command changes the wrong thing.

It gives teams a safer way to say:

“You can run this operational script, but only inside these boundaries.”

Rex compared with a normal sandbox

A sandbox usually tries to trap the program inside a limited environment.

Rex focuses on something more specific: every operation that touches the host must pass a policy check.

That distinction is useful. The script may still reason, loop, parse data, and make decisions. But when it tries to affect the real system, Rex asks the policy first.

For AI agents, that is important. You may not be able to predict every line of code the agent will generate, but you can define what the host will allow.
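The difference shows up clearly if you count policy checks. In this hypothetical Python sketch, `CheckedHost` stands in for the runtime's host interface and a dictionary stands in for the filesystem. The script parses and counts log lines freely, and only its one read and one write reach the policy.

```python
from collections import Counter


class CheckedHost:
    """Hypothetical host interface: the only route from script to system."""

    def __init__(self, files: dict[str, str], readable: set[str], writable: set[str]):
        self._files = files  # stand-in for the real filesystem
        self._readable = readable
        self._writable = writable
        self.policy_checks = 0

    def read(self, path: str) -> str:
        self.policy_checks += 1
        if path not in self._readable:
            raise PermissionError(f"read denied: {path}")
        return self._files[path]

    def write(self, path: str, data: str) -> None:
        self.policy_checks += 1
        if path not in self._writable:
            raise PermissionError(f"write denied: {path}")
        self._files[path] = data


def summarize_errors(host: CheckedHost) -> dict[str, int]:
    # Host call: goes through a policy check.
    log = host.read("/app/logs/error.log")
    # Pure computation (looping, parsing, counting) never touches the host,
    # so no policy check is involved.
    levels = Counter(line.split(":", 1)[0] for line in log.splitlines() if ":" in line)
    # Host call: another policy check.
    host.write("/tmp/output/summary.txt", repr(dict(levels)))
    return dict(levels)


files = {"/app/logs/error.log": "ERROR: disk full\nWARN: retrying\nERROR: disk full\n"}
host = CheckedHost(files, readable={"/app/logs/error.log"}, writable={"/tmp/output/summary.txt"})
summary = summarize_errors(host)
print(summary)             # {'ERROR': 2, 'WARN': 1}
print(host.policy_checks)  # 2
```

Python itself cannot force a script to use only `host`. In Rex, that isolation comes from Rhai having no host access beyond what the runtime exposes.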

Where this fits with AWS and AI agents

This idea connects directly to the larger shift toward AI agents operating cloud systems.

For example, teams are already thinking about secure agent access with tools such as AWS MCP Server, IAM guardrails, CloudTrail auditing, and Bedrock security controls. Rex adds another layer: policy checks at the moment a script tries to perform an operation.

Useful related reads on wcblog.in:

- [AWS MCP Server GA: secure AI agents for AWS teams](https://wcblog.in/aws-mcp-server-ga-secure-ai-agents-aws/)

- [AWS Bedrock Security: 8 Real Attack Paths and How to Block Them](https://wcblog.in/aws-bedrock-security-8-real-attack-paths-and-how-to-block-them/)

- [OpenClaw Architecture: How It Executes Tasks and What to Remember](https://wcblog.in/openclaw-architecture-how-it-executes-tasks-and-what-to-remember/)

What beginners should remember

If you are new to this topic, remember these five points:

- A script is a small program that performs tasks.

- AI agents can write scripts automatically.

- Automatically generated scripts can be risky.

- Rex checks every important operation against a policy.

- If the policy says no, Rex blocks the action.

That is the whole idea.

Rex is not saying, “Never let AI touch systems.” It is saying, “If AI touches systems, put a rule-enforcing gate in front of every sensitive action.”

FAQ

What is Trusted Remote Execution in AWS?

Trusted Remote Execution, or Rex, is an AWS open-source scripting runtime that checks every system operation against a policy before allowing it to run.

Why is Rex useful for AI agents?

Rex is useful because AI agents can generate scripts dynamically. If an agent writes risky code, Rex can block actions that are outside the approved policy.

What is the difference between the script and the policy?

The script describes what to do. The policy describes what is allowed. Rex sits between them and enforces the policy at runtime.

What is Cedar in Rex?

Cedar is the policy language used to define permissions. It can describe which actions are allowed on which resources and under what conditions.

What is Rhai in Rex?

Rhai is the scripting language used for Rex scripts. It is intentionally limited and does not give scripts direct system access by default.

Does Rex make AI agents completely safe?

No tool makes AI agents completely safe. Rex reduces risk by enforcing hard permission boundaries around system operations.

Final takeaway

Trusted Remote Execution is easiest to understand as a teacher’s rulebook for scripts. The script may ask to do many things, but Rex checks the rulebook first. That makes it easier to use AI agents and operational scripts without giving them unlimited power over the system.